Why Security Teams Keep Trusting Geo-Blocking When Attackers Don't Care

Your SSO has geo-blocking. Your WAF has geo-blocking. Your firewall has geo-blocking. Every security vendor ships it as a feature because it's easy to explain to executives and simple to implement.

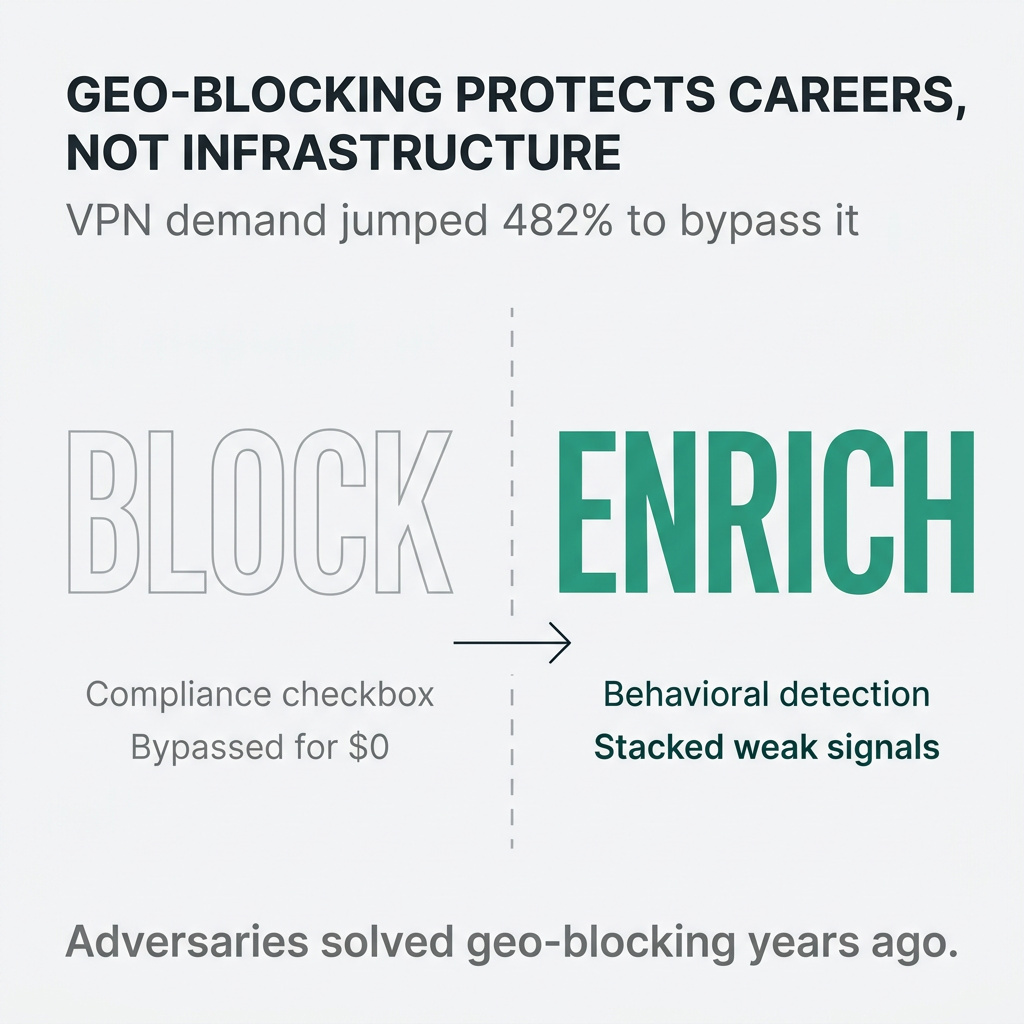

The problem? Adversaries solved geographic restrictions years ago.

I'm not saying geo-blocking has zero value. It filters out the most obvious noise: automated scanners, opportunistic attacks, and script kiddies probing from known-bad regions. That's something, not nothing.

But not much.

The Bypass Problem Nobody Wants to Admit

Look at what happened when Texas enforced age verification laws for adult content. VPN demand jumped 275% overnight. In Montana, it spiked 482%.

If teens and grandparents can bypass geographic restrictions with free consumer technology, your adversaries aren't even breaking a sweat.

The argument that bypass infrastructure is expensive falls apart once you look at the economics. Residential proxy services now offer access to 200-350 million residential IPs across 195 countries, and the market has commoditized so thoroughly that prices dropped 70% in two years.

For attackers, this isn't a barrier. It's a shared commodity cost.

Adversaries solved geographic blocking so long ago it's not even a speed bump.

Why Organizations Keep Building Around It Anyway

If geo-blocking is this easy to bypass, why do security teams keep architecting entire policy frameworks around it?

Two reasons: compliance and career protection.

Many regulations require some form of geographic access control. Companies implement geo-IP blocking to check the box, knowing full well this won't stop a determined adversary. The alternative is worse: not implementing it and explaining why after a breach is career suicide.

Nobody wants to be the person who gets asked post-compromise: "Why didn't you block foreign IPs?"

For architecture teams, the cost of applying this basic defense is minimal. For attackers, the cost of defeating it is equally minimal. Both sides accept the theater because the consequences of not participating are worse than the ineffectiveness of the control itself.

It's a checkbox protecting careers more than infrastructure.

What Actually Matters: Enrichment Over Blocking

Here's the distinction: blocking connections in real time based on geography has minimal value. Using geo-IP enrichment for detection and risk scoring? That works.

That's why we built it into ziggiz. It's yet another problem your legacy vendor hopes you'll keep believing can't be solved.

IP addresses change ownership and usage patterns over time. What matters isn't where an IP claims to be from but the behavioral context around the connection.

Take a business-to-employee scenario:

An employee logging in from a consumer home internet connection? Normal.

Same employee logging in from a mobile provider? Also normal, even though mobile geolocation often places them hundreds of miles from where they actually are. They're not using teleporters; they were just never exactly where the geo data said they were.

Employee switching between two different home internet providers in the same day? Not normal, but on its own, not actionable.

Employee connecting from two home providers and the office within a short timeframe? Worth investigating.

This is where enrichment at ingest time becomes critical. You're not blocking on a single signal. You're classifying the context of each session (B2E, B2B, or B2C: business-to-employee, business-to-business, or business-to-consumer) and applying anomaly detection across multiple weak signals.
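The employee scenarios above can be sketched as a small rule set. This is a minimal illustration, not ziggiz's implementation: it assumes each login has already been enriched at ingest with a connection type and a provider name, and the `Login` class, the 24-hour "same day" window, and the 2-hour "short timeframe" are hypothetical choices.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Login:
    """One login event, assumed to be enriched at ingest (hypothetical fields)."""
    user: str
    at: datetime
    connection_type: str  # e.g. "home", "mobile", "office"
    provider: str         # ISP or carrier name supplied by geo-IP enrichment


def _recent(logins, window):
    """Logins no older than `window`, measured back from the newest login."""
    newest = max(login.at for login in logins)
    return [login for login in logins if newest - login.at <= window]


def assess_b2e_logins(logins, same_day=timedelta(hours=24), short=timedelta(hours=2)):
    """Classify one employee's logins using the B2E scenarios described above."""
    if not logins:
        return "normal"

    # Mobile logins are skipped on purpose: carrier geolocation is expected to be imprecise.
    short_window = _recent(logins, short)
    homes_short = {login.provider for login in short_window if login.connection_type == "home"}
    office_short = any(login.connection_type == "office" for login in short_window)
    if len(homes_short) >= 2 and office_short:
        return "investigate"  # several weak signals stacked in a short timeframe

    homes_day = {
        login.provider
        for login in _recent(logins, same_day)
        if login.connection_type == "home"
    }
    if len(homes_day) >= 2:
        return "unusual"      # odd, but not actionable on its own
    return "normal"


t0 = datetime(2024, 5, 1, 8, 0)
print(assess_b2e_logins([
    Login("alice", t0, "home", "ISP-A"),
    Login("alice", t0 + timedelta(hours=1), "home", "ISP-B"),
    Login("alice", t0 + timedelta(hours=2), "office", "Corp"),
]))  # investigate
```

No single rule here blocks anyone; each outcome is a label that feeds further scoring.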

The Myth of Impossible Travel Detection

Financial institutions have been running "impossible travel" detection for years. Everyone assumes they've figured this out.

They haven't.

Impossible travel detection has never been reliable in the modern multi-device, multi-browser, globally mobile world. VPN gateways change due to load balancing. Dynamic IP allocation shifts constantly. Remote work means employees legitimately connect from different locations throughout the day.

The false positive problem is severe enough that most organizations either tune these alerts into uselessness or ignore them entirely.
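To see why the false positives pile up, here is a naive impossible-travel rule of the kind many tools use: compute the great-circle (haversine) distance between two geolocated logins and flag the pair if the implied speed beats an airliner. The 900 km/h threshold and the city-center coordinates are illustrative assumptions, not real geo-IP results; the point is that one imprecise mobile-carrier lookup is enough to trip the rule.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


def is_impossible_travel(first, second, max_kmh=900.0):
    """Naive rule: flag if the implied speed between two logins exceeds max_kmh.

    Each login is a (timestamp, latitude, longitude) tuple taken from geo-IP data.
    """
    (t1, lat1, lon1), (t2, lat2, lon2) = sorted([first, second])
    km = haversine_km(lat1, lon1, lat2, lon2)
    hours = (t2 - t1).total_seconds() / 3600
    if hours == 0:
        return km > 0
    return km / hours > max_kmh


# Same employee: office network in Dallas, then a phone whose carrier egress geolocates to Houston.
office = (datetime(2024, 5, 1, 9, 0), 32.7767, -96.7970)
phone = (datetime(2024, 5, 1, 9, 10), 29.7604, -95.3698)
print(round(haversine_km(*office[1:], *phone[1:])))  # roughly 360
print(is_impossible_travel(office, phone))           # True, yet nobody travelled
```

The rule itself is arithmetically correct; the inputs are what's wrong, which is why tuning thresholds rarely fixes it.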

What financial fraud teams actually do is stack multiple risk factors together. When a user logs in from a second home connection, the system doesn't block them. It asks for verification through a secure secondary channel, such as a Slack message for employees or a push notification to a known device.

Then you layer in other behavioral signals.

What if the same user suddenly accesses an unusual number of customer records in your CRM? That's a second high-noise detection, one most teams would ignore in isolation.

Two weak signals together? Worth investigating.

Add a third signal: the user is on a performance improvement plan or underperforming. Now the combination deserves an analyst's attention.
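Stacking weak signals can be sketched as a scoring step that chooses a response instead of a block. The signal names, weights, and thresholds below are hypothetical and would need tuning against real data; the sketch only shows the shape of the logic: a connection anomaly alone triggers out-of-band verification, two signals trigger an investigation, and the third escalates.

```python
# Hypothetical signal names and weights; real values would come from tuning on your own data.
SIGNAL_WEIGHTS = {
    "new_home_connection": 1.0,   # login from a second home ISP
    "unusual_crm_volume": 1.0,    # far more customer records than this user normally touches
    "performance_plan": 0.5,      # HR context; never acted on alone
}


def decide(signals):
    """Turn a set of weak signals into a response rather than a hard block."""
    unknown = set(signals) - set(SIGNAL_WEIGHTS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    score = sum(SIGNAL_WEIGHTS[s] for s in signals)

    if score >= 2.5:
        return "escalate_to_analyst"
    if score >= 2.0:
        return "investigate"
    if "new_home_connection" in signals:
        # Verify out of band (chat message, push to a known device) instead of blocking.
        return "step_up_verification"
    return "allow"  # a lone CRM spike or HR flag is treated as noise


print(decide({"new_home_connection"}))                        # step_up_verification
print(decide({"new_home_connection", "unusual_crm_volume"}))  # investigate
```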

Why Legacy SIEMs Can't Do This

Most security teams don't have the infrastructure to implement multi-signal behavioral detection.

Their geo-IP data lives in one tool, authentication logs in another, CRM access logs somewhere else entirely. Correlating these signals in real time means building a Rube Goldberg machine of integrations, each one fragile and expensive to maintain.

The fundamental problem: traditional SIEMs don't enrich data at ingest time. They rely on third-party bolt-on solutions that work with varying degrees of success. Even when those work, they don't apply enrichment semantically, based on what a field actually means.

You have to manually map every field containing an IP address across every log source. Firewall logs call it "src_ip." Authentication logs call it "source_address." Proxy logs call it "client_ip." And you're charged on ingest for every additional enrichment byte, for every single source. Turning this on increases your costs by 40% overnight.

In a traditional pipeline, you're building this mapping by hand for every single data source.

It's time-consuming, easily broken, and expensive. When a vendor changes its log format (which happens constantly), your enrichment pipeline breaks until someone manually fixes the field mappings.
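A hand-built mapping tends to look something like this sketch, which reuses the field names from the paragraph above. The source names and the renamed `clientIP` field are hypothetical; what matters is the failure mode: when a vendor renames a field, the lookup returns `None` instead of raising an error, so enrichment stops without anyone noticing.

```python
from typing import Optional

# Hand-maintained mapping: which field holds the client IP in each log source.
FIELD_MAP = {
    "firewall": "src_ip",
    "auth": "source_address",
    "proxy": "client_ip",
}


def extract_ip(source: str, record: dict) -> Optional[str]:
    """Return the value of the IP field the mapping says this source uses, or None."""
    field = FIELD_MAP.get(source)
    if field is None:
        return None           # a new source nobody has mapped yet
    return record.get(field)  # also None, silently, if the vendor renamed the field


before = {"client_ip": "203.0.113.7", "url": "https://example.com/"}
after = {"clientIP": "203.0.113.7", "url": "https://example.com/"}  # hypothetical format change
print(extract_ip("proxy", before))  # 203.0.113.7
print(extract_ip("proxy", after))   # None: enrichment quietly stops for this source
```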

SOC analysts face hundreds or thousands of daily alerts. Most are false positives. The system doesn't distinguish signal from noise because it lacks the contextual enrichment to make intelligent decisions.

The Semantic Approach: Enrichment That Scales

A semantic data model solves this by understanding what fields represent, not what they're named.

When you tell the system to "enrich IP addresses," it identifies every field across every log source containing an IP. Doesn't matter what the field is called. No manual mapping. No fragile pipelines breaking when vendors update their schemas.
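One ingredient of this, detecting IP-bearing fields by their values rather than their names, can be sketched with Python's standard `ipaddress` module. This is a simplified stand-in, not the ziggiz semantic model: it walks a parsed log record and reports every string that parses as an IPv4 or IPv6 address, whatever the field is called.

```python
import ipaddress
from typing import Any, Iterator, Tuple


def find_ip_fields(record: Any, path: str = "") -> Iterator[Tuple[str, str]]:
    """Yield (field_path, value) for every string value that parses as an IP address."""
    if isinstance(record, dict):
        for key, value in record.items():
            child = f"{path}.{key}" if path else str(key)
            yield from find_ip_fields(value, child)
    elif isinstance(record, list):
        for index, value in enumerate(record):
            yield from find_ip_fields(value, f"{path}[{index}]")
    elif isinstance(record, str):
        try:
            ipaddress.ip_address(record)  # accepts IPv4 and IPv6 text forms
        except ValueError:
            return  # not an IP address; skip it
        yield path, record


event = {
    "clientIP": "203.0.113.7",
    "http": {"forwarded_for": ["198.51.100.20", "10.0.0.5"]},
    "user": "alice",
}
for field, value in find_ip_fields(event):
    print(field, value)
# clientIP 203.0.113.7
# http.forwarded_for[0] 198.51.100.20
# http.forwarded_for[1] 10.0.0.5
```

Value-based detection alone has limits: a version string such as "1.2.3.4" would also match, so a production model would presumably combine value checks with field names, source context, and type information.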

Our lakehouse architecture at ziggiz applies temporal enrichment at ingest time. We partner with IPinfo to provide consistent context enrichment across all your cyber data sources, so you can find threats in data sources that remain inaccessible to legacy vendors.
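Temporal enrichment matters because IP addresses change ownership and usage over time. A minimal sketch of a point-in-time lookup follows. The `HISTORY` table, its organizations, and the documentation-range prefix are made up for illustration and do not reflect IPinfo's API or data format; the idea is simply to attach, at ingest, the context that was valid when the event happened.

```python
import bisect
import ipaddress
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class NetworkInfo:
    org: str
    connection_type: str  # e.g. "hosting", "isp"


# Hypothetical history: per network, (valid_from, info) pairs sorted by valid_from.
HISTORY = {
    ipaddress.ip_network("198.51.100.0/24"): [
        (datetime(2022, 1, 1), NetworkInfo("Example Hosting Co", "hosting")),
        (datetime(2024, 3, 1), NetworkInfo("Example Residential ISP", "isp")),
    ],
}


def lookup_as_of(ip: str, when: datetime) -> Optional[NetworkInfo]:
    """Return the record that was valid for `ip` at time `when`, if any."""
    addr = ipaddress.ip_address(ip)
    for network, versions in HISTORY.items():
        if addr in network:
            starts = [valid_from for valid_from, _ in versions]
            idx = bisect.bisect_right(starts, when) - 1  # last version starting at or before `when`
            return versions[idx][1] if idx >= 0 else None
    return None


def enrich_at_ingest(event: dict) -> dict:
    """Copy the event and attach network context as of the event's own timestamp."""
    info = lookup_as_of(event["src_ip"], event["timestamp"])
    return {
        **event,
        "src_org": info.org if info else None,
        "src_connection_type": info.connection_type if info else None,
    }


old_event = {"src_ip": "198.51.100.20", "timestamp": datetime(2023, 6, 1)}
new_event = {"src_ip": "198.51.100.20", "timestamp": datetime(2025, 1, 15)}
print(enrich_at_ingest(old_event)["src_connection_type"])  # hosting
print(enrich_at_ingest(new_event)["src_connection_type"])  # isp
```

Running the same lookup at investigation time with only today's data would tag the 2023 event as residential ISP traffic, which is the kind of drift that enriching at ingest avoids.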

This isn't about replacing your existing tools. It's about adding the layer that makes those tools useful.

You keep your geo-blocking checkboxes in your SSO, WAF, and firewall. Those still serve their purpose for compliance and stopping the most obvious automated threats.

But you stop relying on them for real detection.

Beyond Geography: Connection Context That Matters

Geography is only the beginning. Once semantic enrichment is working, you can layer in other connection attributes.

Consumer VPNs. Residential proxies. Tor exit nodes. Hosting provider IPs. Mobile carrier networks. Corporate VPN gateways.

Each of these is a weak signal on its own. Combined with behavioral patterns and contextual information about the session type (B2E, B2B, B2C), they become strong.

A B2E connection from a residential proxy? High risk.

A B2C connection from a residential proxy? Depends on your business model, but potentially normal.

A B2B connection suddenly shifting from a corporate IP to a consumer VPN mid-session? Worth investigating.
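The three cases above amount to a lookup keyed on both session context and network type, plus a check for a network shift within a session. The policy table and labels in this sketch are hypothetical and deliberately small; it assumes each request in a session has already been enriched with a network type such as `residential_proxy` or `consumer_vpn`.

```python
# Hypothetical policy table: (session context, enriched network type) -> risk label.
RISK = {
    ("B2E", "residential_proxy"): "high",
    ("B2C", "residential_proxy"): "depends",  # business-model dependent
    ("B2E", "corporate_vpn"): "low",
    ("B2B", "corporate"): "low",
}

# Worst first; combinations missing from the table are "unknown", ranked just below "high".
SEVERITY = ["high", "unknown", "depends", "low"]


def assess_session(context, network_types):
    """network_types: enriched network type of each request in one session, in order."""
    # A B2B session hopping from a corporate network to a consumer VPN mid-session.
    for prev, curr in zip(network_types, network_types[1:]):
        if context == "B2B" and prev == "corporate" and curr == "consumer_vpn":
            return "investigate"

    labels = {RISK.get((context, t), "unknown") for t in network_types}
    for label in SEVERITY:
        if label in labels:
            return label
    return "unknown"  # empty session: nothing to assess


print(assess_session("B2E", ["residential_proxy"]))           # high
print(assess_session("B2C", ["residential_proxy"]))           # depends
print(assess_session("B2B", ["corporate", "consumer_vpn"]))   # investigate
```

Keying on the pair rather than on the network type alone is the point: the same residential proxy is high risk for an employee session and possibly routine for a consumer one.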

The semantic approach lets you apply these conditions qualitatively and in context, managing the noise while finding the true signal.

Making Detection Work Without Elite Teams

The question I get most often: does this require an elite security engineering team to implement?

No.

From our customers' perspective, this works. The complexity lives in the platform architecture, not in the operational burden on security teams.

That's the entire point of building commodity infrastructure for security analytics. Organizations see 60-80% reductions in mean time to investigate when they implement automated enrichment workflows that correlate security data into a unified view.

You don't need PhDs on staff. You don't need years of detection engineering experience. You need infrastructure that accelerates analysts instead of constraining them.

Detection is engineering, not intuition.

The Real Shift: From Theater to Engineering

The fundamental mindset shift isn't about abandoning geo-blocking. It's about understanding what security controls actually do versus what they're supposed to do.

Geo-blocking stays useful for compliance, for stopping automated scanning, for marginally increasing adversary costs. Keep those checkboxes checked.

But stop building your detection architecture around the assumption that geography matters to adversaries. They solved the problem years ago.

Start building around behavioral patterns, contextual enrichment, and multi-signal correlation. Build on infrastructure that understands your data semantically, regardless of vendor schemas. Detection needs to survive technology shifts and vendor churn.

Build on a cyber lakehouse architecture that makes sophisticated detection accessible to organizations without elite teams.

The adversaries aren't logging in from home. Your defenses shouldn't assume they are.